Dag File Processing

Dag File Processing refers to the process of reading the Python files that define your Dags and storing them so that the scheduler can schedule them.

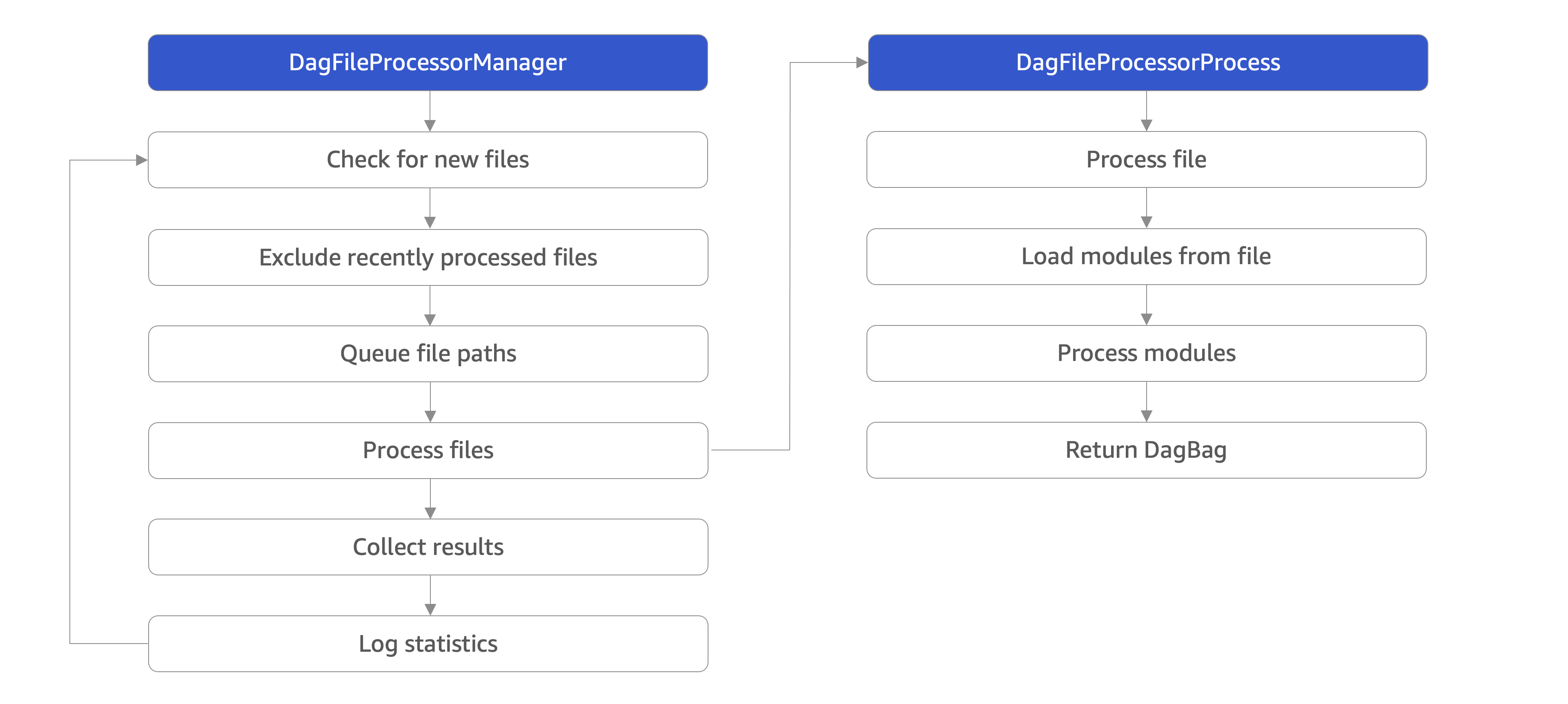

There are two primary components involved in Dag file processing. The DagFileProcessorManager is a process executing an infinite loop that determines which files need to be processed, and the DagFileProcessorProcess is a separate process that is started to convert an individual file into one or more Dag objects.

The DagFileProcessorManager runs user code. As a result, it runs as a standalone process, started with the airflow dag-processor CLI command.

DagFileProcessorManager has the following steps:

Check for new files: If the elapsed time since the Dag was last refreshed is > refresh_interval then update the file paths list

Exclude recently processed files: Exclude files that have been processed more recently than min_file_process_interval and have not been modified

Queue file paths: Add files discovered to the file path queue

Process files: Start a new DagFileProcessorProcess for each file, up to a maximum of parsing_processes

Collect results: Collect the result from any finished Dag processors

Log statistics: Print statistics and emit dag_processing.total_parse_time
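The exclusion and queueing steps above can be sketched in plain Python. This is a simplified illustration, not Airflow's implementation: FileState, files_to_queue and start_batch are hypothetical names, and timestamps are plain floats in seconds.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class FileState:
    """Hypothetical bookkeeping for one Dag file (not an Airflow class)."""
    last_processed: float  # when the file was last parsed, in seconds
    last_modified: float   # modification time of the file, in seconds


def files_to_queue(states, now, min_file_process_interval):
    """Return a queue of paths that should be parsed again.

    A file is excluded only if it was processed more recently than
    min_file_process_interval AND has not been modified since that parse.
    """
    queue = deque()
    for path, state in sorted(states.items()):
        recently_processed = (now - state.last_processed) < min_file_process_interval
        modified_since = state.last_modified > state.last_processed
        if recently_processed and not modified_since:
            continue
        queue.append(path)
    return queue


def start_batch(queue, running, parsing_processes):
    """Pop paths off the queue until parsing_processes parsers are busy."""
    started = []
    while queue and len(running) + len(started) < parsing_processes:
        started.append(queue.popleft())
    return started


states = {
    "a.py": FileState(last_processed=100.0, last_modified=50.0),   # recent, unchanged
    "b.py": FileState(last_processed=100.0, last_modified=110.0),  # recent, but edited
    "c.py": FileState(last_processed=10.0, last_modified=5.0),     # interval elapsed
}
queue = files_to_queue(states, now=120.0, min_file_process_interval=30.0)
print(list(queue))  # ['b.py', 'c.py']
print(start_batch(queue, running=["x.py"], parsing_processes=2))  # ['b.py']
```

Only a.py is skipped: it was parsed 20 seconds ago and has not changed. With one parser already busy and parsing_processes set to 2, a single new file is started and c.py stays queued for a later loop iteration.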

DagFileProcessorProcess has the following steps:

Process file: The entire process must complete within dag_file_processor_timeout

The Dag file is loaded as a Python module: Must complete within dagbag_import_timeout

Process modules: Find Dag objects within Python module

Return DagBag: Provide the DagFileProcessorManager a list of the discovered Dag objects
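The "load as a Python module" and "find Dag objects" steps can be illustrated with the standard library alone. The sketch below is an assumption-laden stand-in, not Airflow's code: the Dag class is a placeholder so the example runs without Airflow installed, load_module and find_dags are hypothetical helpers, and the timeouts mentioned above are left out.

```python
import importlib.util
import os
import tempfile


class Dag:
    """Placeholder for Airflow's Dag class, so the sketch runs without Airflow."""

    def __init__(self, dag_id):
        self.dag_id = dag_id


def load_module(path, dag_class, name="example_dag_file"):
    """Execute a Python file as a module and return the module object."""
    spec = importlib.util.spec_from_file_location(name, path)
    module = importlib.util.module_from_spec(spec)
    # Shortcut for this sketch only: inject the placeholder class so the
    # example file does not need an import statement.
    module.Dag = dag_class
    spec.loader.exec_module(module)  # runs all top-level code in the file
    return module


def find_dags(module, dag_class):
    """Collect every module-level object that is an instance of dag_class."""
    return [obj for obj in vars(module).values() if isinstance(obj, dag_class)]


def demo():
    source = 'first = Dag("first")\nsecond = Dag("second")\nnot_a_dag = 42\n'
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "example_dag_file.py")
        with open(path, "w") as handle:
            handle.write(source)
        module = load_module(path, Dag)
        return sorted(dag.dag_id for dag in find_dags(module, Dag))


print(demo())  # ['first', 'second']
```

Because exec_module runs the whole file, any slow top-level statement in a Dag file is paid for on every parse.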

Fine-tuning your Dag processor performance

What impacts Dag processor’s performance

The Dag processor is responsible for continuously parsing Dag files and synchronizing them with the Dags in the database. In order to fine-tune your Dag processor, you need to consider a number of factors:

- The kind of deployment you have
  - what kind of filesystem you have to share the Dags (impacts performance of continuously reading Dags)
  - how fast the filesystem is (in many cases of distributed cloud filesystem you can pay extra to get more throughput/faster filesystem)
  - how much memory you have for your processing
  - how much CPU you have available
  - how much networking throughput you have available

- The logic and definition of your Dag structure:
  - how many Dag files you have
  - how many Dags you have in your files
  - how large the Dag files are (remember Dag parser needs to read and parse the file every n seconds)
  - how complex they are (i.e. how fast they can be parsed, how many tasks and dependencies they have)
  - whether parsing your Dag file involves importing a lot of libraries or heavy processing at the top level (Hint! It should not. See Top level Python Code)

- The Dag processor configuration
  - how many Dag processors you have
  - how many parsing processes you have in your Dag processor
  - how much time Dag processor waits between re-parsing of the same Dag (it happens continuously)
  - how many callbacks you run per Dag processor loop

How to approach Dag processor’s fine-tuning

Airflow gives you a lot of “knobs” to turn to fine-tune performance, but deciding which knobs to turn to get the best effect is a separate task that depends on your particular deployment, your Dag structure, hardware availability and expectations. Part of the job when managing the deployment is to decide what you are going to optimize for. For example, some users are fine with 30-second delays in parsing new Dags in exchange for lower CPU usage, whereas others expect Dags to be parsed almost instantly when they appear in the Dags folder, at the expense of higher CPU usage.

Airflow gives you the flexibility to decide, but you should find out what aspect of performance is most important for you and decide which knobs you want to turn in which direction.

Generally for fine-tuning, your approach should be the same as for any performance improvement and optimizations (we will not recommend any specific tools - just use the tools that you usually use to observe and monitor your systems):

it’s extremely important to monitor your system with the tools you normally use for monitoring. This document does not go into the details of particular metrics and tools; it only describes what kind of resources you should monitor. Follow your own best practices for monitoring to collect the right data.

decide which aspect of performance is most important for you (what you want to improve)

observe your system to see where your bottlenecks are: CPU, memory, I/O are the usual limiting factors

based on your expectations and observations, decide what your next improvement is, then go back to observing your performance and bottlenecks. Performance improvement is an iterative process.

What resources might limit Dag processor’s performance

There are several areas of resource usage that you should pay attention to:

FileSystem performance. The Airflow Dag processor relies heavily on parsing (sometimes a lot of) Python files, which are often located on a shared filesystem. The Dag processor continuously reads and re-parses those files. The same files have to be made available to workers, so often they are stored in a distributed filesystem. You can use various filesystems for that purpose (NFS, CIFS, EFS, GCS fuse, Azure File System are good examples). There are various parameters you can control for those filesystems to fine-tune their performance, but this is beyond the scope of this document. You should observe statistics and usage of your filesystem to determine if problems come from the filesystem performance. For example, there is anecdotal evidence that increasing IOPS (and paying more) for EFS dramatically improves the stability and speed of parsing Airflow Dags when EFS is used.

Another solution to FileSystem performance, if it becomes your bottleneck, is to turn to alternative mechanisms of distributing your Dags. Embedding Dags in your image and GitSync distribution both have the property that the files are available locally for the Dag processor, so it does not have to use a distributed filesystem to read them. Local access is usually as fast as it can be, especially if your machines use fast SSD disks for local storage. Those distribution mechanisms have other characteristics that might make them not the best choice for you, but if your problems with performance come from distributed filesystem performance, they might be the best approach to follow.

Database connections and Database usage might become a problem as you want to increase performance and process more things in parallel. Airflow is known for being “database-connection hungry” - the more Dags you have and the more you want to process in parallel, the more database connections will be opened. This is generally not a problem for MySQL as its model of handling connections is thread-based, but this might be a problem for Postgres, where connection handling is process-based. It is a general consensus that if you have even a medium-size Postgres-based Airflow installation, the best solution is to use PGBouncer as a proxy to your database. The Helm Chart for Apache Airflow supports PGBouncer out-of-the-box.

CPU usage is most important for FileProcessors - those are the processes that parse and execute Python Dag files. Since the Dag processor typically triggers such parsing continuously, when you have a lot of Dags, the processing might take a lot of CPU. You can mitigate it by increasing min_file_process_interval, but this is one of the trade-offs mentioned above: changes to such files will be picked up more slowly, and you will see delays between submitting the files and having them available in the Airflow UI and executed by the Scheduler. Optimizing the way your Dags are built and avoiding external data sources is your best approach to improving CPU usage. If you have more CPUs available, you can increase the number of parsing processes with parsing_processes.

Airflow might use quite a significant amount of memory when you try to get more performance out of it. Often more performance is achieved in Airflow by increasing the number of processes handling the load, and each process requires a whole Python interpreter loaded, a lot of classes imported, and temporary in-memory storage. Airflow optimizes much of this by using forking and copy-on-write memory, but if new classes are imported after forking this can lead to extra memory pressure. You need to observe whether your system is using more memory than it has, which results in using swap disk and dramatically decreases performance. When you look at memory usage, pay attention to the kind of memory you are observing. Usually you should look at working memory (names might vary depending on your deployment) rather than total memory used.
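The CPU trade-off around min_file_process_interval can be estimated with a back-of-the-envelope model. The sketch below is only an approximation under stated assumptions: every file is re-parsed exactly once per interval, parsing is purely CPU-bound, and process start-up overhead is ignored. The function names and the input numbers are hypothetical; measure your own parse times before relying on such an estimate.

```python
def estimated_parse_load(num_files, avg_parse_seconds, min_file_process_interval):
    """Average number of CPU cores kept busy by continuous re-parsing."""
    return num_files * avg_parse_seconds / min_file_process_interval


def interval_for_cpu_budget(num_files, avg_parse_seconds, cpu_budget):
    """Smallest interval (seconds) that keeps the load within cpu_budget cores."""
    return num_files * avg_parse_seconds / cpu_budget


# Illustrative numbers: 300 Dag files taking 0.5 s each to parse.
print(estimated_parse_load(300, 0.5, 30))    # 5.0 cores at a 30 s interval
print(estimated_parse_load(300, 0.5, 60))    # 2.5 cores at a 60 s interval
print(interval_for_cpu_budget(300, 0.5, 2))  # 75.0 s to stay within 2 cores
```

Doubling the interval halves the estimated load, at the cost of changes taking up to twice as long to be picked up.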

What can you do, to improve Dag processor’s performance

When you know what your resource usage is, the improvements that you can consider might be:

improve the logic, efficiency of parsing and reduce complexity of your top-level Dag Python code. It is parsed continuously so optimizing that code might bring tremendous improvements, especially if you try to reach out to some external databases etc. while parsing Dags (this should be avoided at all costs). The Top level Python Code document explains the best practices for writing your top-level Python code. The Reducing Dag complexity document provides some areas that you might look at when you want to reduce complexity of your code.

improve utilization of your resources. This is when you have free capacity in your system that seems underutilized (again CPU, memory, I/O, networking are the prime candidates). Actions such as increasing the number of parsing processes might improve performance at the expense of higher utilization of those resources.

increase hardware capacity (for example if you see that CPU is limiting you or that I/O you use for Dag filesystem is at its limits). Often the problem with Dag processor performance is simply because your system is not “capable” enough and this might be the only way, unless a shared database or filesystem is a bottleneck.

experiment with different values for the “Dag processor tunables”. Often you might get better effects by simply exchanging one performance aspect for another. For example if you want to decrease the CPU usage, you might increase file processing interval (but the result will be that new Dags will appear with bigger delay). Usually performance tuning is the art of balancing different aspects.

sometimes you can change Dag processor behavior slightly (for example, change the parsing sort order) to get better fine-tuned results for your particular deployment.
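To make the first point about top-level code concrete, the sketch below contrasts work done at import time with work deferred until a task runs. It is plain Python with hypothetical names: expensive_lookup stands in for a slow call such as a database query, and process_tables stands in for a task's callable. A counter is used instead of a real slow call so the effect is easy to verify.

```python
CALLS = {"expensive_lookup": 0}


def expensive_lookup():
    """Stand-in for a slow call (database query, HTTP request, ...)."""
    CALLS["expensive_lookup"] += 1
    return ["orders", "customers", "payments"]


# Anti-pattern: a module-level statement like the one below would run the
# slow call every time the file is parsed, not only when a task executes.
# TABLES = expensive_lookup()


def process_tables():
    """Hypothetical task callable: the slow call happens at run time only."""
    tables = expensive_lookup()
    return len(tables)


# Loading the file (as parsing does) has not triggered the slow call yet.
print(CALLS["expensive_lookup"])  # 0
print(process_tables())           # 3
print(CALLS["expensive_lookup"])  # 1
```

With the call moved inside the function, parsing the file costs only the function definitions, regardless of how slow the lookup is.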

Dag processor Configuration options

The following config settings can be used to control aspects of the Dag processor.

However, you can also look at other non-performance-related Dag processor configuration parameters available in the Configuration Reference, in the [dag_processor] section.

file_parsing_sort_mode: The Dag processor will list and sort the Dag files to decide the parsing order.

min_file_process_interval: Number of seconds after which a Dag file is re-parsed. The Dag file is parsed every min_file_process_interval number of seconds. Updates to Dags are reflected after this interval. Keeping this number low will increase CPU usage.

parsing_processes: The Dag processor can run multiple processes in parallel to parse Dag files. This defines how many processes will run.
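Airflow configuration options can also be supplied as environment variables named AIRFLOW__{SECTION}__{KEY}. The sketch below builds those names for the three options above. The helper airflow_env_var is hypothetical, and the values are illustrative rather than recommendations; check the Configuration Reference for the accepted values and defaults in your Airflow version.

```python
def airflow_env_var(section, key):
    """Build the environment variable name for a config option."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


# Illustrative values only; tune them against your own measurements.
settings = {
    "file_parsing_sort_mode": "modified_time",
    "min_file_process_interval": 60,
    "parsing_processes": 4,
}

env = {
    airflow_env_var("dag_processor", key): str(value)
    for key, value in settings.items()
}

for name, value in sorted(env.items()):
    print(f"{name}={value}")
```

The resulting pairs can be exported in the environment of the process that runs the Dag processor.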