Multi-Team

Warning

Multi-Team is an experimental/incomplete feature currently in preview. The feature will not be fully complete until Airflow 3.3 and may be subject to changes without warning based on user feedback. See the Work in Progress section below for details.

Multi-Team Airflow is a feature that enables organizations to run multiple teams within a single Airflow deployment while providing resource isolation and team-based access controls. This feature is designed for medium to large organizations that need to share Airflow infrastructure across multiple teams while maintaining logical separation of resources.

Note

Multi-Team Airflow is different from multi-tenancy. It provides isolation within a single deployment but is not designed for complete tenant separation. All teams share the same Airflow infrastructure, scheduler, and metadata database.

When to Use Multi-Team Mode

Multi-Team mode is designed for medium to large organizations that typically have many discrete teams who need access to an Airflow environment. Often there is a dedicated platform or DevOps team to manage the shared Airflow infrastructure, but this is not required.

Use Multi-Team mode when:

You have many teams that need to share Airflow infrastructure

You need resource isolation (Variables, Connections, Secrets, etc.) between teams at the UI and API level (see Airflow Security Model for task-level isolation limitations)

You want separate execution environments per team

You want separate views per team in the Airflow UI

You want to minimize operational overhead or cost by sharing a single Airflow deployment

Core Concepts

Teams

A Team is a logical grouping that represents a group of users within your organization. Teams are in part stored in the Airflow metadata database and serve as the basis for resource isolation.

In the Airflow metadata database, a team has a very simple structure containing only one field:

name: A unique identifier for the team (3-50 characters, alphanumeric with hyphens and underscores)

Teams are associated with Dag bundles through a separate association table, which links team names to Dag bundle names.

Dag Bundles and Team Ownership

Teams are associated with Dags through Dag Bundles. A Dag bundle can be owned by at most one team. When a Dag bundle is assigned to a team:

All Dags within that bundle belong to that team

Tasks in those Dags inherit the team association

All Callbacks associated with those Dags also inherit the team association

Triggers created by those Dags’ tasks inherit the team association

The scheduler uses this relationship to determine which executor to use

Note

The relationship chain is: Task/Callback → Dag → Dag Bundle → Team

Resource Isolation

When Multi-Team mode is enabled, the following resources can be scoped to specific teams:

Variables: Team members can only access variables owned by their team or global variables

Connections: Team members can only access connections owned by their team or global connections

Pools: Pools can be assigned to teams

Resources without a team assignment are considered global and accessible to all teams.

Secrets Backends

Airflow’s environment variables, metastore, and local filesystem secrets backends are team-aware. Custom secrets backends are supported on a case-by-case basis.

When a task requests a Variable or Connection, the secrets backend will return a team-specific value, if any. The backend will automatically resolve the correct value based on the requesting task’s team.

Auth Manager

In order to use multi-team mode, the auth manager must be compatible with it. A compatible auth manager must implement two methods:

is_authorized_team: Determines whether a user is authorized to perform a given action on a team. It is used primarily to check whether a user belongs to a team.

_get_teams: Returns the set of teams defined in the auth manager.

During initialization, Airflow validates that all teams defined in the auth manager are also present in the Airflow metadata database. If any team is missing, Airflow will raise an error.

If the auth manager you are using does not implement these methods, Airflow will raise a NotImplementedError at runtime.
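The startup check described above comes down to a set difference. It compares the teams the auth manager reports with the teams stored in the metadata database. The following is a minimal stand-alone sketch, not Airflow's code. The helper name validate_auth_manager_teams is hypothetical. Raising ValueError is also an assumption, because the documentation only says that Airflow raises an error.

```python
def validate_auth_manager_teams(auth_manager_teams: set, db_teams: set) -> None:
    """Hypothetical helper: fail if the auth manager defines a team unknown to the metadata DB."""
    missing = set(auth_manager_teams) - set(db_teams)
    if missing:
        # Assumption: ValueError stands in for whatever error Airflow actually raises.
        raise ValueError(f"Teams missing from the metadata database: {sorted(missing)}")


# team_b is defined in the auth manager but was never created with "airflow teams create".
try:
    validate_auth_manager_teams({"team_a", "team_b"}, {"team_a"})
except ValueError as exc:
    print(exc)  # Teams missing from the metadata database: ['team_b']
```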

Examples of auth managers compatible with multi-team mode:

Simple auth manager (recommended for development usage only)

Enabling Multi-Team Mode

To enable Multi-Team mode, set the following configuration in your airflow.cfg:

```ini
[core]
multi_team = True
```

Or via environment variable:

export AIRFLOW__CORE__MULTI_TEAM=True

Warning

Changing this setting on an existing deployment requires careful planning.

Creating and Managing Teams

Teams are managed using the Airflow CLI. The following commands are available:

Creating a Team

airflow teams create <team_name>

Team names must be 3-50 characters long and contain only alphanumeric characters, hyphens, and underscores.
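The naming rule can be expressed as a single regular expression. This sketch assumes that "alphanumeric" means ASCII letters and digits. is_valid_team_name is a hypothetical helper, and Airflow's own validation may differ in detail.

```python
import re

# Assumption: ASCII letters/digits plus "-" and "_", 3 to 50 characters in total.
TEAM_NAME_PATTERN = re.compile(r"[A-Za-z0-9_-]{3,50}")


def is_valid_team_name(name: str) -> bool:
    """Return True if the whole string matches the documented team-name rule."""
    return TEAM_NAME_PATTERN.fullmatch(name) is not None


print(is_valid_team_name("team_a"))    # True
print(is_valid_team_name("data-eng"))  # True
print(is_valid_team_name("ab"))        # False: shorter than 3 characters
print(is_valid_team_name("team a"))    # False: spaces are not allowed
```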

Listing Teams

airflow teams list

This displays all teams in the deployment with their names.

Deleting a Team

airflow teams delete <team_name>

Or to skip the confirmation prompt:

airflow teams delete <team_name> --yes

Warning

A team cannot be deleted if it has associated resources (Dag bundles, Variables, Connections, or Pools). You must remove these associations first.

Configuring Team Resources

Team-scoped Variables

Variables can be associated with teams when created. Tasks belonging to a team can access:

Variables owned by their team

Global variables (no team association)

When a task requests a variable, the system checks for a team-specific variable first. If none exists, it falls back to the global variable with the same key.
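The lookup order can be sketched with an in-memory mapping keyed by (team, key), where a team of None marks a global variable. The store and get_variable are hypothetical stand-ins for the secrets backends. The values reuse the my_variable / team_a example from the environment-variable format described in this section.

```python
from typing import Dict, Optional, Tuple

# Hypothetical store: (team, key) -> value; team None means a global variable.
Store = Dict[Tuple[Optional[str], str], str]


def get_variable(store: Store, key: str, team: Optional[str]) -> Optional[str]:
    """Return the team-scoped value if present, else the global value, else None."""
    if team is not None and (team, key) in store:
        return store[(team, key)]
    return store.get((None, key))


store: Store = {
    (None, "my_variable"): "global_value",
    ("team_a", "my_variable"): "team_a_value",
    ("team_a", "team_a_only"): "secret",
}

print(get_variable(store, "my_variable", "team_a"))  # team_a_value
print(get_variable(store, "my_variable", "team_b"))  # global_value
print(get_variable(store, "team_a_only", "team_b"))  # None: team_b cannot see team_a's variable
```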

Team-scoped variables can be created and managed through the Airflow UI or via environment variables.

Via environment variables, you can set team-scoped variables using the format:

```bash
# Global variable
export AIRFLOW_VAR_MY_VARIABLE="global_value"

# Team-scoped variable for "team_a"
export AIRFLOW_VAR__TEAM_A___MY_VARIABLE="team_a_value"
```

The format is: AIRFLOW_VAR__{TEAM}___{KEY} (note: double underscore before team, triple underscore between team and key)
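Because the naming scheme is mechanical, the names can be generated rather than typed by hand. The hypothetical helper below builds both global and team-scoped names. It assumes that the team name and key are upper-cased, as in the examples. The same pattern would apply to the AIRFLOW_CONN prefix used for connections.

```python
from typing import Optional


def team_scoped_env_name(prefix: str, name: str, team: Optional[str] = None) -> str:
    """Build e.g. AIRFLOW_VAR_MY_VARIABLE (global) or AIRFLOW_VAR__TEAM_A___MY_VARIABLE (team).

    prefix is "AIRFLOW_VAR" for Variables or "AIRFLOW_CONN" for Connections.
    Assumption: team and key are upper-cased, matching the documented examples.
    """
    if team is None:
        return f"{prefix}_{name.upper()}"
    # Double underscore before the team, triple underscore between team and key.
    return f"{prefix}__{team.upper()}___{name.upper()}"


print(team_scoped_env_name("AIRFLOW_VAR", "my_variable"))            # AIRFLOW_VAR_MY_VARIABLE
print(team_scoped_env_name("AIRFLOW_VAR", "my_variable", "team_a"))  # AIRFLOW_VAR__TEAM_A___MY_VARIABLE
```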

Team-scoped Connections

Connections follow the same pattern as variables. Tasks can access connections owned by their team or global connections.

Team-scoped connections can be created and managed through the Airflow UI or via environment variables.

Via environment variables:

```bash
# Global connection
export AIRFLOW_CONN_MY_DATABASE="postgresql://..."

# Team-scoped connection for "team_a"
export AIRFLOW_CONN__TEAM_A___MY_DATABASE="postgresql://..."
```

The format is: AIRFLOW_CONN__{TEAM}___{CONN_ID} (note: double underscore before team, triple underscore between team and connection ID)
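In the other direction, a deployment script might need to tell which team an existing environment variable belongs to. The hypothetical parser below splits on the first triple underscore after the team prefix. Team names that end in an underscore make that split ambiguous. The team part also comes back upper-cased, so it cannot be mapped back to the original lowercase name losslessly.

```python
from typing import Optional, Tuple


def parse_team_scoped_env_name(env_name: str, prefix: str) -> Tuple[Optional[str], str]:
    """Split an env var name into (TEAM, KEY); TEAM is None for a global name (sketch)."""
    team_prefix = f"{prefix}__"
    if env_name.startswith(team_prefix):
        # Assumption: the first "___" separates the team from the key.
        team, sep, key = env_name[len(team_prefix):].partition("___")
        if sep:
            return team, key
    global_prefix = f"{prefix}_"
    if env_name.startswith(global_prefix):
        return None, env_name[len(global_prefix):]
    raise ValueError(f"{env_name!r} does not start with {global_prefix!r}")


print(parse_team_scoped_env_name("AIRFLOW_CONN__TEAM_A___MY_DATABASE", "AIRFLOW_CONN"))  # ('TEAM_A', 'MY_DATABASE')
print(parse_team_scoped_env_name("AIRFLOW_CONN_MY_DATABASE", "AIRFLOW_CONN"))            # (None, 'MY_DATABASE')
```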

Team-scoped Pools

Pools can be assigned to teams, providing resource isolation for task execution slots. When a pool is assigned to a team:

Only tasks from that team can use the pool

The scheduler validates pool access when scheduling tasks

Tasks attempting to use a pool from another team will fail with an error

Pools without a team assignment remain globally accessible to all teams.
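The pool rules reduce to a small check. This is a hypothetical sketch: check_pool_access and PoolAccessError are illustrative names, not Airflow APIs. Following the rule that only tasks from the owning team can use a team pool, a teamless task is rejected like any other non-member.

```python
from typing import Optional


class PoolAccessError(Exception):
    """Hypothetical error; Airflow's actual exception type is not specified here."""


def check_pool_access(pool: str, pool_team: Optional[str], task_team: Optional[str]) -> None:
    """Allow global pools for everyone and team pools only for that team's tasks."""
    if pool_team is None:
        return  # global pool: usable by every team
    if pool_team != task_team:
        raise PoolAccessError(f"Pool {pool!r} belongs to {pool_team!r}, task team is {task_team!r}")


check_pool_access("default_pool", None, "team_b")     # global pool: allowed
check_pool_access("team_a_pool", "team_a", "team_a")  # same team: allowed
```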

Team-based Executor Configuration

One of the most powerful features of Multi-Team mode is the ability to configure a different executor per team, or the same executor type configured differently. This allows each team to have dedicated compute resources or its own execution flow.

Similarly to global executors, team-scoped executor configurations also support multiple executors (for example, both LocalExecutor and KubernetesExecutor), allowing tasks within that team to specify which executor to use. For details on configuring multiple executors, see Using Multiple Executors Concurrently.

Configuration Format

The executor configuration supports team-based assignments using the following format:

```ini
[core]
executor = GlobalExecutor;team1=Team1Executor;team2=Team2Executor
```

For example:

```ini
[core]
executor = LocalExecutor;team_a=CeleryExecutor;team_b=KubernetesExecutor
```

In this configuration:

LocalExecutor is the global default executor

Tasks from team_a use CeleryExecutor or the LocalExecutor

Tasks from team_b use KubernetesExecutor or the LocalExecutor

Tasks from the global scope use the LocalExecutor

Important Rules

Global executor must come first: At least one global executor (without a team prefix) must be configured and must appear before any team-specific executors.

Teams must exist: All team names in the executor configuration must exist in the database before Airflow starts.

Executor must support multi-team: Not all executors support multi-team mode. The executor class must have supports_multi_team = True.

No duplicate teams: Each team may only appear once in the executor configuration.

No duplicate executors within a team: A team cannot have the same executor configured multiple times.

Example configurations:

```ini
# Valid: Global executor with team-specific executors
executor = LocalExecutor;team_a=CeleryExecutor

# Valid: Multiple team-specific executors
executor = LocalExecutor;team_a=CeleryExecutor;team_b=KubernetesExecutor

# Valid: Multiple executors globally and per team
executor = LocalExecutor,KubernetesExecutor;team_a=CeleryExecutor,KubernetesExecutor;team_b=LocalExecutor

# Invalid: No global executor
executor = team_a=CeleryExecutor;team_b=LocalExecutor

# Invalid: Global executor after team executor
executor = team_a=CeleryExecutor;LocalExecutor

# Invalid: Duplicate team
executor = LocalExecutor;team_a=CeleryExecutor;team_b=LocalExecutor;team_a=KubernetesExecutor

# Invalid: Duplicate executor within a team
executor = LocalExecutor;team_a=CeleryExecutor,CeleryExecutor;team_b=LocalExecutor
```
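The rules above can be checked mechanically. The sketch below is a hypothetical parser, not Airflow's own. It returns a mapping from team (None for global) to executor entries. It checks ordering, duplicates, and, optionally, whether each team exists. It cannot check supports_multi_team because it never imports executor classes. Entries count as duplicates only when their text is identical.

```python
from typing import Dict, Iterable, List, Optional


def parse_executor_config(value: str, known_teams: Optional[Iterable[str]] = None) -> Dict[Optional[str], List[str]]:
    """Parse a [core] executor string into {team or None: [executor entries]} (sketch)."""
    known = set(known_teams) if known_teams is not None else None
    result: Dict[Optional[str], List[str]] = {}
    seen_team = False
    for segment in value.split(";"):
        segment = segment.strip()
        team: Optional[str] = None
        executors_part = segment
        if "=" in segment:
            # Team segment, e.g. "team_a=CeleryExecutor,KubernetesExecutor".
            team_part, _, executors_part = segment.partition("=")
            team = team_part.strip()
            if team in result:
                raise ValueError(f"Duplicate team {team!r}")
            if known is not None and team not in known:
                raise ValueError(f"Team {team!r} does not exist")
            seen_team = True
        elif seen_team:
            raise ValueError("Global executors must come before team executors")
        executors = [e.strip() for e in executors_part.split(",") if e.strip()]
        if not executors:
            raise ValueError(f"Empty executor list in segment {segment!r}")
        entries = result.setdefault(team, [])
        entries.extend(executors)
        if len(entries) != len(set(entries)):
            raise ValueError(f"Duplicate executor for {team or 'the global scope'}")
    if None not in result:
        raise ValueError("At least one global executor is required")
    return result


print(parse_executor_config("LocalExecutor;team_a=CeleryExecutor"))
# {None: ['LocalExecutor'], 'team_a': ['CeleryExecutor']}
```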

Aliasing Executors Across Teams

When the same executor type is used at both the global and team level (e.g., LocalExecutor globally and LocalExecutor for a team), tasks that want to target the global executor need a way to distinguish between the two instances. To accomplish this, you can assign aliases to core executors using the Alias:ExecutorName syntax:

```ini
[core]
executor = global_celery_exec:CeleryExecutor;team_a=team_celery_exec:CeleryExecutor
```

With this configuration:

The global CeleryExecutor is available via the alias global_celery_exec

The team_a CeleryExecutor is available via the alias team_celery_exec

A task in team_a that sets executor="team_celery_exec", executor="CeleryExecutor", or executor="airflow.providers.celery.executors.celery_executor.CeleryExecutor" will run on the team executor

A task in team_a that sets executor="global_celery_exec" will run on the global executor

```python
from airflow.providers.standard.operators.bash import BashOperator

# These tasks are assumed to be defined in a Dag whose bundle belongs to team_a.

# Runs on the global CeleryExecutor via alias
BashOperator(
    task_id="uses_global",
    executor="global_celery_exec",
    bash_command="echo 'running on global executor'",
)

# Runs on team_a's CeleryExecutor via alias
BashOperator(
    task_id="use_team_alias",
    executor="team_celery_exec",
    bash_command="echo 'running on team executor'",
)

# Runs on team_a's CeleryExecutor via class name
BashOperator(
    task_id="use_team_classname",
    executor="CeleryExecutor",
    bash_command="echo 'running on team executor'",
)

# Runs on team_a's CeleryExecutor via full module path
BashOperator(
    task_id="use_team_module_path",
    executor="airflow.providers.celery.executors.celery_executor.CeleryExecutor",
    bash_command="echo 'running on team executor'",
)

# Also runs on team_a's CeleryExecutor (implicit team default)
BashOperator(
    task_id="use_default",
    bash_command="echo 'running on default team executor'",
)
```

Aliases work with all core executors (LocalExecutor, CeleryExecutor, KubernetesExecutor, etc.) as well as custom executor module paths. For more information on aliases and multiple executor configuration, see Using Multiple Executors Concurrently.
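The resolution behavior for the global_celery_exec / team_celery_exec configuration can be modeled as a search over executor entries. In this hypothetical sketch, ExecutorEntry and resolve_executor are illustrative, not Airflow classes. A task's requested name is matched against the alias, class name, and module path. Team executors are searched before global ones. A task with no explicit executor gets its team's first executor. Treating the first entry as the default mirrors how multiple executors are ordered globally, but applying that to teams is an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional

CELERY = "airflow.providers.celery.executors.celery_executor.CeleryExecutor"


@dataclass(frozen=True)
class ExecutorEntry:
    module_path: str
    alias: Optional[str] = None
    team: Optional[str] = None  # None for a global executor

    @property
    def class_name(self) -> str:
        return self.module_path.rsplit(".", 1)[-1]

    def matches(self, requested: str) -> bool:
        # A task may name an executor by alias, class name, or full module path.
        return requested in (self.alias, self.class_name, self.module_path)


def resolve_executor(
    requested: Optional[str],
    team_entries: List[ExecutorEntry],
    global_entries: List[ExecutorEntry],
) -> ExecutorEntry:
    """Pick the executor for a task that belongs to a team (sketch)."""
    if requested is None:
        # Assumption: the first configured entry is the default, team first, then global.
        return (team_entries or global_entries)[0]
    # Team executors are searched first, so "CeleryExecutor" resolves to the team instance.
    for entry in [*team_entries, *global_entries]:
        if entry.matches(requested):
            return entry
    raise LookupError(f"No executor matches {requested!r}")


global_entries = [ExecutorEntry(CELERY, alias="global_celery_exec")]
team_a_entries = [ExecutorEntry(CELERY, alias="team_celery_exec", team="team_a")]

print(resolve_executor("global_celery_exec", team_a_entries, global_entries).team)  # None
print(resolve_executor("CeleryExecutor", team_a_entries, global_entries).team)      # team_a
```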

Team-specific Executor Settings

When multiple teams use the same executor type (e.g., both team_a and team_b using CeleryExecutor), each team can provide its own configuration for that executor. This allows teams to point to different Celery brokers, use different Kubernetes namespaces, or customize any executor setting independently.

Configuration Resolution Order

When a team executor reads a configuration value (e.g., [celery] broker_url), the system checks the following sources in order, returning the first value found:

1. Team-specific environment variable: AIRFLOW__{TEAM}___{SECTION}__{KEY}

2. Team-specific config file section: [team_name=section]

3. Default values: built-in defaults or fallback values

The following sources are skipped for team executors (they do not yet support team-based configuration):

Command execution ({key}_cmd)

Secrets backend ({key}_secret)

Note

Team-specific configuration does not fall back to the global environment variable or global config file settings. For example, suppose both a global CeleryExecutor and a team CeleryExecutor are in use, and you raise celery.worker_concurrency for the global CeleryExecutor from the default of 16 to 32. The team CeleryExecutor is not forced to 32. It continues to use the default of 16 unless it is explicitly overridden with team-specific configuration.
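The resolution order, including the deliberate absence of a global fallback, can be sketched as follows. get_team_config is hypothetical and works on plain mappings: the environment, parsed config sections keyed by section name, and built-in defaults. Upper-casing the section and key in the environment variable name is an assumption based on the AIRFLOW__TEAM_A___CELERY__BROKER_URL example.

```python
from typing import Mapping, Optional, Tuple


def team_config_env_name(team: str, section: str, key: str) -> str:
    # Documented format: AIRFLOW__{TEAM}___{SECTION}__{KEY}, with the team name upper-cased.
    return f"AIRFLOW__{team.upper()}___{section.upper()}__{key.upper()}"


def get_team_config(
    team: str,
    section: str,
    key: str,
    environ: Mapping[str, str],
    config_sections: Mapping[str, Mapping[str, str]],
    defaults: Mapping[Tuple[str, str], str],
) -> Optional[str]:
    """Resolve a setting for a team executor: team env var, then [team=section], then default.

    The global [section] and AIRFLOW__SECTION__KEY are never consulted, and the
    *_cmd / *_secret variants are not supported for teams.
    """
    env_value = environ.get(team_config_env_name(team, section, key))
    if env_value is not None:
        return env_value
    team_section = config_sections.get(f"{team}={section}", {})
    if key in team_section:
        return team_section[key]
    return defaults.get((section, key))


environ = {"AIRFLOW__CELERY__WORKER_CONCURRENCY": "32"}  # global override only
sections = {"celery": {"worker_concurrency": "32"}}      # global [celery] override only
defaults = {("celery", "worker_concurrency"): "16"}
print(get_team_config("team_a", "celery", "worker_concurrency", environ, sections, defaults))  # 16
```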

Via Environment Variables

Team-specific configuration can be provided using environment variables with the following format:

AIRFLOW__{TEAM}___{SECTION}__{KEY}

Note the delimiters: double underscore before the team name (part of the AIRFLOW__ prefix), triple underscore between the team name and section, and double underscore between section and key. The team name is uppercase.

```bash
# team_a's Celery broker URL
export AIRFLOW__TEAM_A___CELERY__BROKER_URL="redis://team-a-redis:6379/0"

# team_b's Celery broker URL
export AIRFLOW__TEAM_B___CELERY__BROKER_URL="redis://team-b-redis:6379/0"

# team_b's Celery result backend
export AIRFLOW__TEAM_B___CELERY__RESULT_BACKEND="db+postgresql://team-b-db/celery_results"
```

Via Config File

Team-specific settings can also be placed in the airflow.cfg file using sections prefixed with the team name followed by an equals sign:

```ini
# Global celery settings (used by the global executor, NOT as a fallback for teams)
[celery]
broker_url = redis://default-redis:6379/0
result_backend = db+postgresql://default-db/celery_results

# team_a overrides
[team_a=celery]
broker_url = redis://team-a-redis:6379/0
result_backend = db+postgresql://team-a-db/celery_results

# team_b overrides
[team_b=celery]
broker_url = redis://team-b-redis:6379/0
result_backend = db+postgresql://team-b-db/celery_results
```
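Python's standard configparser accepts these section names as-is, so grouping the team overrides takes only a few lines. split_team_sections is a hypothetical helper for illustration, not how Airflow reads its configuration. It skips plain sections such as [celery] because those are not a fallback for teams.

```python
import configparser
from typing import Dict

CONFIG = """
[celery]
broker_url = redis://default-redis:6379/0

[team_a=celery]
broker_url = redis://team-a-redis:6379/0

[team_b=celery]
broker_url = redis://team-b-redis:6379/0
result_backend = db+postgresql://team-b-db/celery_results
"""


def split_team_sections(parser: configparser.ConfigParser) -> Dict[str, Dict[str, Dict[str, str]]]:
    """Group "[team=section]" blocks as {team: {section: {key: value}}}."""
    teams: Dict[str, Dict[str, Dict[str, str]]] = {}
    for name in parser.sections():
        team, sep, section = name.partition("=")
        if not sep:
            continue  # global section such as [celery]: not a fallback for teams
        teams.setdefault(team, {})[section] = dict(parser.items(name, raw=True))
    return teams


parser = configparser.ConfigParser()
parser.read_string(CONFIG)
team_settings = split_team_sections(parser)
print(team_settings["team_a"]["celery"]["broker_url"])  # redis://team-a-redis:6379/0
```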

Dag Bundle to Team Association

Dag bundles are associated with teams through the Dag bundle configuration. When configuring your Dag bundles, you specify a team_name for each bundle:

```ini
[dag_processor]
dag_bundle_config_list = [
    {
      "name": "team_a_dags",
      "classpath": "airflow.dag_processing.bundles.local.LocalDagBundle",
      "kwargs": {"path": "/opt/airflow/dags/team_a"},
      "team_name": "team_a"
    },
    {
      "name": "team_b_dags",
      "classpath": "airflow.dag_processing.bundles.local.LocalDagBundle",
      "kwargs": {"path": "/opt/airflow/dags/team_b"},
      "team_name": "team_b"
    },
    {
      "name": "shared_dags",
      "classpath": "airflow.dag_processing.bundles.local.LocalDagBundle",
      "kwargs": {"path": "/opt/airflow/dags/shared"}
    }
  ]
```

In this example:

Dags in /opt/airflow/dags/team_a belong to team_a

Dags in /opt/airflow/dags/team_b belong to team_b

Dags in /opt/airflow/dags/shared have no team (global)

Note

The team specified in team_name must exist in the database before syncing the Dag bundles. Create teams first using airflow teams create.
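The bundle list is plain JSON, so the bundle-to-team mapping and the "team must already exist" rule can be sketched with the standard json module. bundle_teams is a hypothetical helper. The JSON below is the example configuration above, reformatted onto fewer lines.

```python
import json
from typing import Dict, Iterable, Optional

# The dag_bundle_config_list value from the example above, reformatted.
BUNDLE_CONFIG = """
[
  {"name": "team_a_dags", "classpath": "airflow.dag_processing.bundles.local.LocalDagBundle",
   "kwargs": {"path": "/opt/airflow/dags/team_a"}, "team_name": "team_a"},
  {"name": "team_b_dags", "classpath": "airflow.dag_processing.bundles.local.LocalDagBundle",
   "kwargs": {"path": "/opt/airflow/dags/team_b"}, "team_name": "team_b"},
  {"name": "shared_dags", "classpath": "airflow.dag_processing.bundles.local.LocalDagBundle",
   "kwargs": {"path": "/opt/airflow/dags/shared"}}
]
"""


def bundle_teams(config_json: str, existing_teams: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map bundle name -> team name (None for global bundles), rejecting unknown teams."""
    existing = set(existing_teams)
    mapping: Dict[str, Optional[str]] = {}
    for bundle in json.loads(config_json):
        team = bundle.get("team_name")  # absent key means a global bundle
        if team is not None and team not in existing:
            raise ValueError(f"Bundle {bundle['name']!r} references unknown team {team!r}")
        mapping[bundle["name"]] = team
    return mapping


print(bundle_teams(BUNDLE_CONFIG, ["team_a", "team_b"]))
# {'team_a_dags': 'team_a', 'team_b_dags': 'team_b', 'shared_dags': None}
```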

How Scheduling Works

When Multi-Team mode is enabled, the scheduler performs additional logic to determine the correct executor for each task:

Task to Team Resolution: The scheduler resolves the team for each task by following the relationship chain:

Task → Dag (via dag_id)

Dag → Dag Bundle (via bundle_name)

Dag Bundle → Team (via dag_bundle_team association table)

Executor Selection: Once the team is determined:

If a team-specific executor is configured, use that executor

Otherwise, fall back to the global default executor
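The two steps can be sketched with plain dictionaries standing in for the metadata tables and the parsed [core] executor setting. All Dag IDs, bundle names, and helper functions here are hypothetical. The point is the lookup chain and the fallback, not the scheduler's actual code.

```python
from typing import Optional

# Hypothetical snapshots of the metadata the scheduler consults.
DAG_TO_BUNDLE = {"etl_a": "team_a_dags", "etl_b": "team_b_dags", "housekeeping": "shared_dags"}
BUNDLE_TO_TEAM = {"team_a_dags": "team_a", "team_b_dags": "team_b"}  # dag_bundle_team rows
EXECUTORS = {None: ["LocalExecutor"], "team_a": ["CeleryExecutor"]}  # parsed [core] executor


def resolve_team(dag_id: str) -> Optional[str]:
    """Task -> Dag (dag_id) -> Dag bundle (bundle_name) -> team (association table)."""
    bundle_name = DAG_TO_BUNDLE[dag_id]
    return BUNDLE_TO_TEAM.get(bundle_name)  # None for bundles without a team


def default_executor_for(dag_id: str) -> str:
    """Use the team's executor if one is configured, else fall back to the global default."""
    team = resolve_team(dag_id)
    return EXECUTORS.get(team, EXECUTORS[None])[0]


print(default_executor_for("etl_a"))  # CeleryExecutor
print(default_executor_for("etl_b"))  # LocalExecutor: team_b has no team-specific executor
```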

Warning

Multi-Team Airflow provides logical isolation that forms a security perimeter around teams, not complete isolation. All teams share the same metadata database and common Airflow infrastructure. For strict security requirements, consider separate Airflow deployments.

Team-scoped Triggerer

When Multi-Team mode is enabled, a triggerer should be scoped to each specific team using the --team-name CLI argument. A team-scoped triggerer processes deferred tasks (triggers) belonging to that team’s Dags. This allows teams to run isolated triggerer instances with independent capacity and failure domains.

Configuration

Start a team-scoped triggerer by passing --team-name:

```bash
# Triggerer for team_a only
airflow triggerer --team-name team_a

# Triggerer for team_b only
airflow triggerer --team-name team_b

# Global triggerer: processes triggers from Dags with no team association
airflow triggerer
```

Startup validation ensures that core.multi_team is enabled and the specified team exists in the database.

Behavior

Team-scoped triggerer (--team-name team_x): Only picks up triggers whose originating Dag belongs to a bundle mapped to team_x.

Global triggerer (no --team-name): Only picks up triggers whose originating Dag belongs to a bundle with no team assignment.

Multi-Team disabled (core.multi_team = False): --team-name is rejected. No filtering occurs and all triggerers process all triggers (existing behavior).

Interaction with --queues

Team filtering and queue filtering are orthogonal — they combine as AND conditions. For example, a triggerer started with --team-name team_a --queues q1,q2 only processes triggers that both belong to team_a and were deferred from tasks in queues q1 or q2.
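The selection logic can be sketched as two independent predicates joined with AND. The Trigger dataclass, its queue field, and triggers_for are hypothetical. They model the documented behavior, not the triggerer's actual database query.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class Trigger:
    trigger_id: int
    team: Optional[str]  # team of the originating Dag's bundle; None if teamless
    queue: str           # queue of the task that deferred


def triggers_for(
    triggers: Iterable[Trigger],
    team_name: Optional[str] = None,
    queues: Optional[Set[str]] = None,
    multi_team: bool = True,
) -> List[Trigger]:
    """Return the triggers a triggerer with the given --team-name/--queues would pick up."""
    if team_name is not None and not multi_team:
        raise ValueError("--team-name requires core.multi_team = True")
    selected = []
    for trigger in triggers:
        # Team filter (only when Multi-Team is on): None means "teamless triggers only".
        if multi_team and trigger.team != team_name:
            continue
        # Queue filter is ANDed with the team filter.
        if queues is not None and trigger.queue not in queues:
            continue
        selected.append(trigger)
    return selected


triggers = [
    Trigger(1, "team_a", "q1"),
    Trigger(2, "team_a", "q3"),
    Trigger(3, "team_b", "q1"),
    Trigger(4, None, "q1"),
]
print([t.trigger_id for t in triggers_for(triggers, "team_a", {"q1", "q2"})])  # [1]
print([t.trigger_id for t in triggers_for(triggers)])                          # [4]
```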

Note

Ensure that at least one triggerer is running for every team. Otherwise, that team’s triggers will remain unassigned until one starts. The same applies to every queue when --queues is used. If you combine --team-name and --queues, this requirement extends to each team-and-queue combination.

Team-Based Asset Event Filtering

When Multi-Team mode is enabled, asset events are filtered by team membership before they trigger downstream Dag runs. This prevents asset events produced by one team’s Dags from unintentionally triggering Dag runs for a different team.

Default Behavior

By default, a consuming Dag only receives asset events from producers within the same team or from Dags with no team association, i.e. global Dags.

Cross-Team Opt-In with access_control

To allow producers from specific other teams to trigger consumers of a given asset, use the access_control parameter on the Asset definition with an AssetAccessControl instance:

```python
from airflow.sdk import Asset, AssetAccessControl

shared_data = Asset(
    name="shared_data",
    uri="s3://bucket/shared/data.csv",
    access_control=AssetAccessControl(
        producer_teams=["team_analytics", "team_ml"],
    ),
)
```

With this configuration, asset events from team_analytics or team_ml will be accepted by any consuming Dag that schedules on shared_data, in addition to events from the consumer’s own team.

To block global (teamless) Dag producers from triggering consumers, set allow_global=False:

```python
from airflow.sdk import Asset, AssetAccessControl

strict_data = Asset(
    name="strict_data",
    uri="s3://bucket/strict/data.csv",
    access_control=AssetAccessControl(
        producer_teams=["team_analytics"],
        allow_global=False,
    ),
)
```

See Cross-team asset event filtering with access_control in the Assets documentation for usage details and validation rules.

Behavioral Rules

The following table describes the complete filtering logic:

| Producer | Consumer | producer_teams | allow_global | Result | Reason |
|---|---|---|---|---|---|
| Team A (DAG) | Team A | (any) | (any) | ✅ Allowed | Same team |
| Team A (DAG) | Team B | does not include Team A | (any) | ❌ Blocked | Different team, no opt-in |
| Team A (DAG) | Team B | includes Team A | (any) | ✅ Allowed | Cross-team opt-in |
| (no team, DAG) | Team B | (any) | True | ✅ Allowed | Global producer, allow_global is True |
| (no team, DAG) | Team B | (any) | False | ❌ Blocked | Global producer blocked by allow_global=False |
| Team A (DAG) | (no team) | (any) | (any) | ✅ Allowed | Teamless consumer accepts events from any DAG producer |
| (no team, DAG) | (no team) | (any) | (any) | ✅ Allowed | Both global |
| Team A (API) | Team A | (any) | (any) | ✅ Allowed | Same team |
| Team A (API) | Team B | includes Team A | (any) | ✅ Allowed | Cross-team opt-in |
| Team A (API) | (no team) | (any) | (any) | ✅ Allowed | Teamless consumer accepts events from any source |
| (no team, API) | Team B | (any) | (any) | ❌ Blocked | Teamless API user cannot trigger team-bound consumer |
| (no team, API) | (no team) | (any) | (any) | ✅ Allowed | Both global |

Key rules:

Same team: Always allowed.

Global (teamless) DAG producer with allow_global=True: Triggers all consumers regardless of team.

Global (teamless) DAG producer with allow_global=False: Blocked from triggering team-bound consumers.

Teamless API user: Can only trigger teamless consumers. Unlike a teamless DAG, which is deployed by a platform operator and intentionally shared, an API user without a team has no verified team affiliation. Their events are therefore restricted to teamless consumers to prevent unscoped access to team-bound pipelines.

Teamless consumer: Accepts events from any source (DAG or API), regardless of team.

Cross-team via producer_teams: Allowed when the producer’s team is listed in the asset’s producer_teams.

Multi-Team disabled: All filtering is skipped; existing behavior is preserved.

API-Triggered Events

When a user creates an asset event via the REST API, the user’s team is resolved from the auth manager. The same filtering rules apply, with one distinction: a teamless API user can only trigger teamless consumers, whereas a teamless DAG producer is treated as global and can trigger any consumer unless the asset sets allow_global=False.
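The behavioral rules can be condensed into one predicate. This is a hypothetical sketch of the filtering table, not Airflow's implementation. The name asset_event_allowed and its parameters are illustrative. The default allow_global=True reflects the documented behavior that global Dag producers are accepted unless allow_global=False is set.

```python
from typing import Collection, Optional


def asset_event_allowed(
    producer_team: Optional[str],
    producer_is_api: bool,
    consumer_team: Optional[str],
    producer_teams: Collection[str] = (),
    allow_global: bool = True,
    multi_team: bool = True,
) -> bool:
    """Return True if an asset event from the producer may trigger the consumer."""
    if not multi_team:
        return True  # filtering skipped entirely
    if consumer_team is None:
        return True  # teamless consumers accept events from any source
    if producer_team == consumer_team:
        return True  # same team
    if producer_team is None:
        # Teamless API users have no verified team; teamless Dags are global producers.
        return False if producer_is_api else allow_global
    return producer_team in producer_teams  # cross-team opt-in


print(asset_event_allowed("team_a", False, "team_b", producer_teams=["team_a"]))  # True
print(asset_event_allowed(None, True, "team_b"))                                # False
```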

Important Considerations

Work in Progress

Multi-Team mode is currently an experimental feature in preview. It is not yet fully complete and may be subject to changes without warning based on user feedback. Some missing functionality includes:

Dimensional metrics by team

Some UI elements may not be fully team-aware

Full provider support for executors and secrets backends

Command- and secrets-based lookup for team-based configuration

Plugins

Global Uniqueness of Identifiers

Dag IDs, Variable keys, and Connection IDs must be unique across the entire Airflow deployment, regardless of which team owns them. This is similar to how S3 bucket names are globally unique across all AWS accounts. You should establish naming conventions within your organization to avoid naming conflicts (e.g., prefix identifiers with the team name).

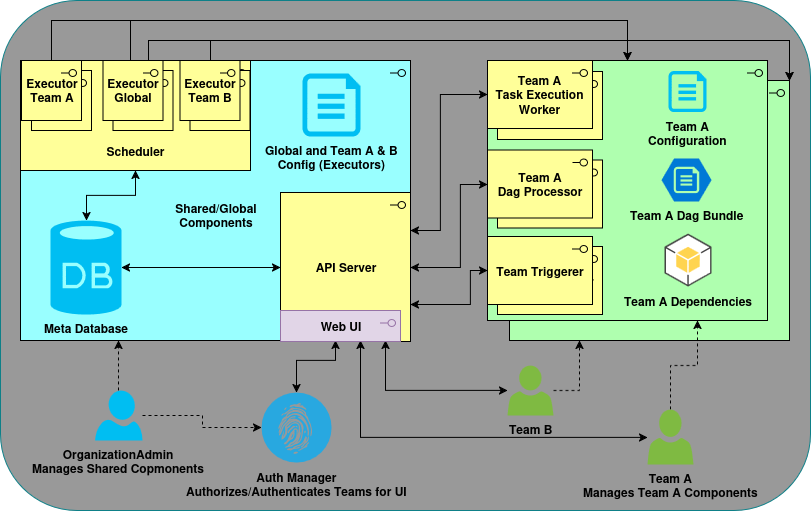

Architecture

The following diagram shows a Multi-Team Airflow deployment with resource isolation between teams.

The components in blue are the shared components and those in green are the team components (note the green shadow box indicating more than one team is present in the architecture).